NAB Sponsored State-of-the-Art Antenna Research for "Rabbit Ear" Replacement

On August 17, NAB unveiled an impressive high tech report they had commissioned from a little-known but very well qualified military contractor on a possible replacement for the ubiquitous “rabbit ears” used in houses that depend on over-the-air reception. It is commendable that, in an effort to reverse the decline in over-the-air viewers, NAB this time decided to pay for good engineering rather than the usual lawyers and lobbyists.

MegaWave Corp., of Devens, MA, did a study for NAB in 1995 on available antenna technology for improved set top performance. NAB’s FASTROAD (Flexible Advanced Services for Television & Radio On All Devices) program, a technology advocacy program, has now hired them again to survey new antenna technology and the opportunities resulting from decreased TV band spectrum. (MegaWave’s hometown is next to Ft. Devens, for many years a major Army Security Agency installation. So it can be surmised that they have dealt with “3 letter agencies” other than FCC.)

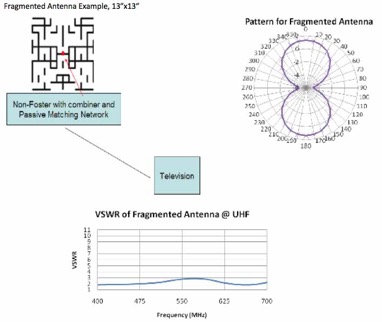

The report is fascinating for us techies, reviewing all sorts of interesting antenna technologies not well known outside of antenna researchers and the military industrial complex. The pictures at the top of this post show one antenna they spent a lot of effort exploring and its performance.

The report points out that many advanced technologies depend on feedback from the DTV receiver on how well it is receiving the signal. In 2007 CEA developed a standard (CEA-909) for such signals from the DTV receivers to the antenna, but receivers with this interface are rare in the US market. In Europe, a comparable interface is more common. (One wonders if the recent spat between NAB and CEA over FM receivers in cell phones will make this type of interindustry cooperation any easier.)

The MegaWave report ends with these conclusions:

- There have been significant and potentially useful antenna design methods and actual hardware developed over the past 15 years that will improve the performance of low‐profile, compact indoor/set‐top DTV antennas.

- In the area of electro‐magnetic computational and design optimization methods Genetic Algorithms (GA) and the Central Force Optimizer (CFO) have proven themselves as powerful tools in the design of broadband, compact antennas.

- Using the above, we have included an example of an advanced antenna technology, specifically the Fragmented Antenna as part of this project. We submit that it would be nearly impossible for a human to replicate its design and performance.

- The Non‐Foster active broadband matching technology and its required semiconductors have matured to the point where they could be incorporated into a commercial indoor/set‐top design to provide a matched antenna where it is considered as being electrically small. In the example shown below it would be used to provide acceptable performance with the Fragmented dipole within the 54‐88 MHz band. Other element geometries are also possible.

- Many of the other advancements listed in Table 1‐1, specifically 2.4 through 2.6, require a CE‐909‐A type interface with the DTV receiver, and while potentially useful if and when manufacturers start adding this feature to their products they offer no practical improvement for the near term.

- The technologies designated as 2.7 through 2.10 while interesting are either too embryonic (2.7 & 2.8), too complicated and too large (2.10) or not well vetted (2.9) to be considered in the near term.

Congratulations to NAB for this technological tour de force.

FCC Website Clutter

In October 2007, this blog described the clutter on the FCC website and compared it to other agencies.

Among the points made at the time nearly 3 years ago were the following:

1) Search engine.

2) Clutter, clutter everywhere. Apparently there is no self control at all on putting more information or more links on the FCC home page.

- Too many links for the same document.

- No other federal commission clutters its home page with individual commissioners' links.

3) Is anyone in charge here?

4) Difficulty of finding information without prior details.

5) Lack of links to specific FCC rules or statutes.

So what has happened since? Well, the search engine has improved a lot. It would be nice to order the results by date, but at least you get results now. At one point searches on “Kevin Martin” got only a handful of links; now searches work reasonably well.

The plot at the top of this page is from the original post and compares the clutter of various agencies’ websites. At the time, FCC had 260 links on its home page, more than any other agency surveyed, and a total of 1188 words, putting it slightly behind Interior at 1233. But FCC was clearly the clutter leader in the NE corner of the plot!

So where does FCC stand today? While your blogger doesn’t have the patience to analyze the other agencies all over again - it is clear that no other agency has the FCC’s obsolescent cluttered design - today’s results for FCC are 259 links and 1105 words. So a net decrease of 1 link and 83 words. Positive movement in the right direction, but a long way to go!
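For readers who want to repeat this kind of count on another home page, here is a minimal sketch using only Python's standard library. It is not necessarily how the numbers above were obtained: the counting rules (every `<a>` tag with an `href` is a link, every run of word characters outside `<script>` and `<style>` is a word) and the `SAMPLE` page are assumptions made for illustration.

```python
import re
from html.parser import HTMLParser


class ClutterCounter(HTMLParser):
    """Counts hyperlinks and visible words in an HTML page."""

    SKIPPED_TAGS = {"script", "style"}  # their contents are not visible text

    def __init__(self):
        super().__init__()
        self.links = 0
        self.words = 0
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1
        elif tag == "a" and any(name == "href" for name, _ in attrs):
            self.links += 1  # named anchors without href are not counted

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0:
            self.words += len(re.findall(r"\w+", data))


def measure_clutter(html):
    """Return (link_count, word_count) for an HTML string."""
    counter = ClutterCounter()
    counter.feed(html)
    counter.close()
    return counter.links, counter.words


# Made-up page used only to exercise the counter.
SAMPLE = """<html><head><title>Agency</title><style>p {color: red}</style></head>
<body><h1>Welcome to the Agency</h1>
<p>Read the <a href="/news">latest news</a> or <a href="/rules">our rules</a>.</p>
<a name="anchor-only">not a link</a>
<script>var x = 1;</script></body></html>"""

links, words = measure_clutter(SAMPLE)
print(f"{links} links, {words} words")
```

Different counting rules (for example, skipping the page title or image alt text) will shift the totals somewhat, so comparisons between agencies are only meaningful when the same rules are applied to each page.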

FCC also remains the only commission in the federal government that clutters its homepage with individual links for each commissioner, not to mention a separate link for “All Commissioners & Press Photos”.

FCC/CGB Secretly Flip-flops on Decade Old SAR Policy

This week there were some odd, unannounced changes on the FCC’s Consumer & Governmental Affairs Bureau’s website of consumer publications. The changes consisted of a rewrite of the “Wireless Devices and Health Concerns” FCC Consumer Facts and the creation of a new one entitled “SAR For Cell Phones: What It Means For You”.

It seems likely that these changes were requested off the public record by CTIA to bolster their case in their lawsuit with San Francisco over SAR disclosure at retail stores that sell cell phones. First the changes.

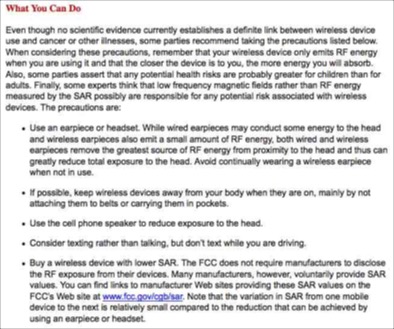

The old “Wireless Devices and Health Concerns” FCC Consumer Facts has now disappeared from the FCC website, but thanks to Google’s caching feature it could still be found in cyberspace for a few days after the change. It is shown below and linked to the copy that was lurking in the Google cache:

In the old version FCC advises consumers to consider “buy(ing) a wireless device with lower SAR”. This concept goes back to 2000 and was implemented by Chmn. Kennard after the UK adopted a similar policy. At the same time, over CTIA’s strenuous objections, FCC greatly simplified the access to SAR data on its website. (The data had always been there, but it was nearly impossible to find prior to the Kennard directed change to make it more accessible.)

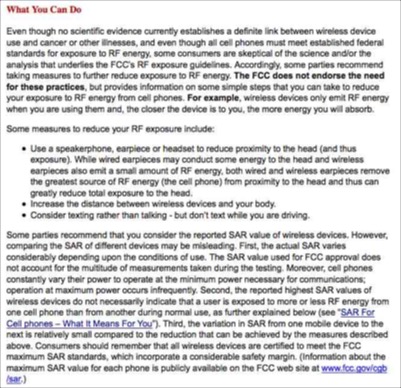

Here is the new version of the fact sheet. Notice the changes?

The “Recent Developments” section of this fact sheet has no information about any change or justification thereof. The CGB homepage has no mention of this topic.

In the new fact sheet, the phrase “some parties recommend taking the precautions listed below” has been replaced with “even though all cell phones must meet established federal standards for exposure to RF energy, some consumers are skeptical of the science and/or the analysis that underlies the FCC’s RF exposure guidelines.” Perhaps with this secretive manipulation of FCC viewpoints, more consumers will be skeptical.

Most significantly the sentence “Buy a wireless device with lower SAR” has disappeared. Then a new paragraph beginning with “Some parties recommend that you consider the reported SAR value of wireless devices” has been added. Who are those “some parties”? Didn’t they include the FCC a mere week ago? Hasn’t this been the FCC position for a decade? Why the sudden change?

CGB has also created, without fanfare, a whole new fact sheet entitled “SAR For Cell Phones: What It Means For You”.

This new fact sheet has a section entitled “What SAR Does Not Show”. It tries to fuzzify the SAR data by saying:

“The SAR value used for FCC approval does not account for the multitude of measurements taken during the testing. Moreover, cell phones constantly vary their power to operate at the minimum power necessary for communications; operation at maximum power occurs infrequently. Consequently, cell phones cannot be reliably compared for their overall exposure characteristics on the basis of a single SAR value for several reasons (each of these examples is based on a reported SAR value for cell phone A that is higher than that for cell phone B)”

I agree with the statement:

For users who are concerned with the adequacy of this standard or who otherwise wish to further reduce their exposure, the most effective means to reduce exposure are to hold the cell phone away from the head or body and to use a speakerphone or hands-free accessory. These measures will generally have much more impact on RF energy absorption than the small difference in SAR between individual cell phones, which, in any event, is an unreliable comparison of RF exposure to consumers, given the variables of individual use.

But the attempt to say SAR doesn’t matter at all is bizarre. While one cannot meaningfully compare cell phone model A on AT&T’s network with cell phone model B on T-Mo’s based on SAR data, if you are the customer of a specific cellular carrier it is meaningful to compare the SARs of the phone models they offer - if you use your phone without a Bluetooth headset and in the held-to-the-head mode.

Cell phone voice minutes are now actually decreasing and the major growth in traffic is in digital information not using cell phones next to the ear. Unfortunately, the focus in this discussion on the head SAR measurement points out a real anachronism in the FCC process for dealing with SAR measurements in OET Bulletin 65, Supplement C (last revised in 1/01). Rather than secretly tweaking consumer guidance, maybe FCC should revise its SAR measurements to show the numbers that matter with respect to the growing type of cell phone use - Blackberry/iPhone-like handheld web/e-mail use. Note that 47 C.F.R. 2.1093(d)(2) gives an SAR limit of 4 W/kg for hands and wrists (vice 1.6 for rest of the body) so today’s models probably are significantly less than the safety limit in the modes in which they are used most of the time.

Earlier this month the Environmental Working Group filed a Freedom of Information Act request with the FCC seeking any records that might show whether the Commission is working with CTIA to challenge a San Francisco law that requires retailers to display radiation information of mobile devices at the point of sale. Is the above change a sign that EWG is right about FCC working secretly with CTIA? Why the FCC secrecy about this policy flip-flop? If FCC now thinks that SAR level is unimportant except for compliance with the 1.6 W/kg limit -- isn’t that newsworthy enough for the ever cluttered FCC home page?

UPDATE

EWG Blog

EWG: FCC downplays cell phone radiation risks

Washington Post article

cnet.com (Owned by CBS)

“Despite the FCC's revision, and the CTIA's stance that studies show no link between long term cell phone use and an increased cancer risk, the debate is not going away anytime soon. Indeed the scientific evidence remains inconclusive and until we know more, CNET will continue to advise concerned users to choose a phone with a low SAR in our cell phone radiation charts.”

OMB Watch

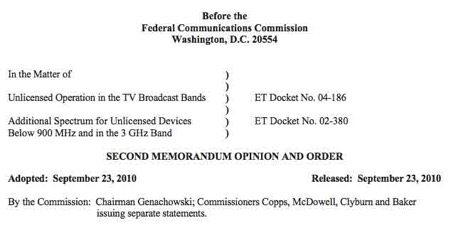

TV White Space Decision is Released

The FCC discussed and approved this morning the above Second Memorandum Opinion and Order resolving questions from the TV white space decision of almost 2 years ago. Oddly, it was released under the hyperbolic headline “FCC Frees Up Vacant TV Airwaves For "Super Wi-Fi" Technologies and Other Technologies”. The document is 79 pages without commissioners’ statements.

Chmn. Genachowski oddly said in his statement, “Today’s Order marks the Commission’s first significant release of unlicensed spectrum in 25 years.” Apparently he overlooked the release of 5 GHz in the 59-64 GHz band (later increased to 7 GHz in 57-64 GHz) in the 12/15/95 Report and Order in Docket 94-124 during his first tenure at the Commission under Chairman Hundt.

The new decision removes the sensing requirement from devices that have geolocation and database access to determine spectrum availability. It keeps the archaic R-6602 definition of where a TV signal is present saying “The current method of calculating TV station contours in Section 73.684 of the rules using the FCC curves in Section 73.699 of the rules is straight forward, well understood and has proven sufficiently accurate over time.” (para. 21) So cities like Monterey, California that are within multiple grade B contours but get little real over-the-air TV service are just out of luck.

It did ease use restrictions near the Canadian and Mexican borders in deference to Native American groups.

It guaranteed at least 2 TV channels in every area for wireless mic use and allows extra use for “Broadway-like” shows and special events if they can show there are no other options.

In response to demands by wireless mic advocates that they get even more spectrum, the Commission stated,

We disagree with those who argue that more spectrum should be reserved for wireless microphones. We observe that wireless microphones generally have operated very inefficiently, perhaps in part due to the luxury of having access to a wealth of spectrum. While there may be users that believe they need access to more spectrum to accommodate more wireless microphones, we find that any such needs must be accommodated through improvements in spectrum efficiency.

Still Gloating Over Their AWS-3 Triumph Over Transparency, Cellular Establishment Has a Disastrous PR Day

Then at 11 PM came the nearly 3 minute long piece by Liz Crenshaw entitled “Cell Phone Radiation: Knowing The Number” that stressed the importance of selecting cell phones based on SAR - the number CTIA tried to keep hidden in the late 1990s. To be fair, the report said that cell phone manufacturers believe that as long as a phone meets FCC standards “there is no reason for concern”.

Since CTIA is boycotting the whole City of San Francisco over its SAR disclosure ordinance, should they be consistent and take action now against WRC Channel 4 and possibly its parent, NBC Universal? But since NBC Universal is in turn owned by GE (at least for the moment), shouldn’t they really retaliate against GE? No more cellular ads on NBC, Telemundo, USA, Bravo, etc. and all CTIA members forbidden to buy GE light bulbs. That’s how CTIA can show GE and its subsidiaries who they are trying to kick around! The citizens of SF are feeling the wrath of CTIA, GE should also.

But the next day, Tuesday, got even worse! After all, WRC Channel 4 is only a local TV station in Washington, while the Department of Transportation’s Distracted Driving Summit got national news coverage and 153,000 Google hits as of this writing. DOT reported that “in 2009, nearly 5,500 people died and half a million were injured in crashes involving a distracted driver. According to National Highway Traffic Safety Administration (NHTSA) research, distraction-related fatalities represented 16 percent of overall traffic fatalities in 2009”. Unfortunately, I cannot find a quote from Mr. Walls on this issue, but I would be glad to append it if CTIA supplies it. (I would call them directly, but I am annoyed they have not acknowledged or responded to my recent public safety inquiry to 3 high officials there on the upcoming FCC prison/cell phone workshop webinar.)

Meanwhile CTIA’s policy on distracted driving appears to be this statement from their website:

CTIA-The Wireless Association® and the wireless industry believe that when it comes to using your wireless device behind the wheel, it’s important to remember safety always comes first and should be every driver’s top priority. While mobile devices are important safety tools, there’s an appropriate time and an inappropriate time to use them.

The wireless industry generally defers to consumers and the driving legislation they support – whether that’s hands-free regulations or bans on talking on their mobile devices while driving.

At the same time, we believe text-messaging while driving is incompatible with safe driving, and we support state and local statutes that ban this activity while driving.

We also agree with proposals that restrict or limit cellular use by inexperienced or novice drivers. Just as many states have graduated drivers' laws, such as restricting the number of passengers or nighttime hours of driving, the industry believes restricting a young driver's use of wireless while becoming better-skilled at the primary driving tasks makes sense.

Report on Staff Professions in National Telecom Regulatory Agencies

Steve Crowley’s blog has a new post entitled “Staff at the FCC: How Many Lawyers, Economists, and Engineers?” that describes a recent report from the German consulting firm WIK-Consult GmbH (a spinoff from the former W. German Bundespost/PTT). The report, Drivers and Effects of the Size and Composition of Telecoms Regulatory Agencies, was written by J. Scott Marcus, a former senior FCC staffer and no relation to your blogger, and Juan Rendon Schneir.

The report is based on data from the following regulatory agencies: Canada (the CRTC), France (ARCEP), Germany (the Federal Network Agency, or BNetzA), the Netherlands (OPTA), New Zealand Commerce Commission (NZCC), Peru (OSIPTEL), Spain (CMT), Sweden (PTS), the United Kingdom (Ofcom) and the United States (FCC). They then tabulate the professional backgrounds of the staff at each agency.

One of the problems with this type of analysis is that the 10 agencies examined have overlapping but different jurisdictions. The Canadian CRTC only has 7 engineers because it doesn’t deal with spectrum policy, which is mainly in the jurisdiction of Industry Canada. The French ARCEP is more like the old FCC Common Carrier Bureau - it also has some postal functions - and French spectrum policy is more in the control of ANFR. Thus it is difficult to compare apples with apples rather than oranges.

Comparison of professions in national regulators

Based on a FOIA request to FCC, WIK found out that FCC now has 268 engineers, 55 economists and 542 lawyers. Actually I was surprised by this, because throughout most of my career engineers were the most numerous professional group although few were in policy positions. The WIK researchers then produced the graph shown at the top of this post, which compares “senior managers” among the 10 agencies. I suspect OET Chief Julius Knapp is the only engineer senior manager considered in the report, emphasizing the imbalance with respect to regulators in other countries. The WIK report comments “Particularly striking was the skills distribution among senior managers in the United States, where of 23 senior managers who were categorised for this study, 22 were lawyers, only one was an engineer, and none were economists.”

The report makes interesting reading as FCC struggles with how to deal with the technical issues that are at the core of many key telecom issues.

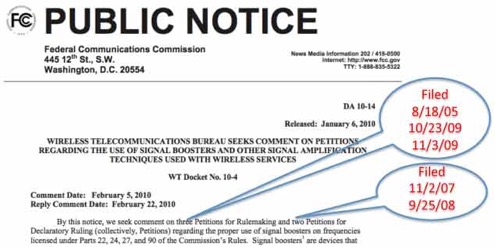

How About Some Transparency in the Handling of Petitions?

The right to petition the federal government is one guaranteed by the 1st Amendment and the APA (5 U.S.C. 553(e)). FCC has implemented this requirement with 47 C.F.R. 1.401. But all this means nothing if petitions fall into an administrative black hole where they cannot be seen, let alone acted on!

The top of this post is a January 2010 FCC public notice (PN) asking for comment on 3 petitions for rulemaking and 2 petitions for declaratory rulings. As the annotations in red indicate, the oldest petition involved was almost 5 years old when the PN was released. (As can be seen in the dates above, all these petitions arrived before the current Chairman took office. This is a longstanding problem going back more than a decade.)

I made some discreet inquiries after this PN was issued and was surprised to find out that 8th Floor staffers do not have routine access to lists of petitions that have been filed or even petitions that are sitting without action x months after filing. It was not even clear if the Chairman’s Office has routine access to this information. Petitions apparently go to bureaus and offices and just sit around until someone decides to act on them - or not. One always has the option of going to the District Court and asking for a writ of mandamus, but that is possibly the only option. Since the existence of these petitions can be secret, it is difficult for interested 3rd parties to find out about them and try to pressure/embarrass the Commission into acting.

Some modest suggestions:

1. The Commission should create an internal tracking system for such petitions and report all filings to all commissioners within a month of filing.

2. The Commission should create a public tracking system, analogous to the FCC Items on Circulation webpage, that documents all petitions more than 3 months old that have not been acted on and makes the text available for public inspection. This need not be a PN requesting comment, just an acknowledgement that the petition has been filed and is under review.
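To show how little machinery the second suggestion needs, here is a rough sketch of the core of such a tracker. Everything in it is hypothetical: the `Petition` fields, the identifiers, the sample filings, and the choice of 90 days as a stand-in for "3 months" are illustrative assumptions, not a description of any existing FCC system.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Petition:
    petition_id: str                  # hypothetical identifier, not an FCC numbering scheme
    title: str
    filed: date
    acted_on: Optional[date] = None   # None means no action has been taken yet


def pending_longer_than(petitions: List[Petition], today: date,
                        max_age_days: int = 90) -> List[Petition]:
    """Return petitions with no action after max_age_days, oldest first."""
    overdue = [
        p for p in petitions
        if p.acted_on is None and (today - p.filed).days > max_age_days
    ]
    return sorted(overdue, key=lambda p: p.filed)


# Made-up filings used only to exercise the function.
FILINGS = [
    Petition("P-1", "Petition for rulemaking (example)", date(2005, 3, 1)),
    Petition("P-2", "Petition for declaratory ruling (example)", date(2009, 12, 15)),
    Petition("P-3", "Petition already resolved (example)", date(2008, 6, 1),
             acted_on=date(2009, 1, 10)),
    Petition("P-4", "Petition for rulemaking (example)", date(2009, 6, 30)),
]

for p in pending_longer_than(FILINGS, today=date(2010, 1, 20)):
    print(p.petition_id, p.filed.isoformat(), p.title)
```

The same list, published monthly with the petition text attached, would serve as the public tracking page; an internal version would simply drop the age threshold and report every new filing.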

"The FCC should be a model of openness, transparency, and a fair, data-driven process."

Julius Genachowski's confirmation hearing for FCC chairman before the Senate Science, Commerce, and Transportation Committee

(Emphasis added)

IEEE 802.11 @ 20

We have mentioned previously that this is the 25th anniversary year of the Docket 81-413 Report and Order that created the 3 unlicensed ISM bands that are the home of Wi-Fi and a myriad of other unlicensed products. It is also the 20th anniversary of IEEE 802.11, the standards group that created the specification for the ubiquitous Wi-Fi products.

802.11 is meeting in Hawaii this week to continue its handiwork in new and exciting areas and is having a modest celebration of the anniversary. The above Facebook page has anniversary messages from the past and present leaders of the group and they were gracious enough to invite your blogger to contribute also.

So congratulations 802.11 for your success that went beyond anyone’s imagination two decades ago when you began. It certainly is way beyond anything we imagined at FCC when we started down this path in 1979.

UPDATE

Some links on the IEEE 802.11 anniversary meeting:

September 2010, 802.11 20th Anniversary Celebration Photographs

Press release with milestones

“Happy 20th Birthday To The IEEE 802.11 WLAN Working Group” from Electronic Design

Spring 2011 VaTech NoVa Campus Course on Spectrum Policy Issues for Engineers

Your blogger will be teaching a new course at the Northern Virginia Campus of Virginia Tech. The course is

ECE 6604 Advanced Topics in Communications: Spectrum Policy and Wireless Innovation.

DESCRIPTION:

This course will review the legal and technical issues in spectrum management, focusing on the issues involved in bringing a new radio technology into operational use in today’s spectrum environment. The discussion will focus on the US regulatory system, but of necessity will include the impact of the International Telecommunication Union and bilateral agreements with Canada and Mexico. The course will use ongoing and recent FCC spectrum proceedings as case studies. Students will be asked to read and discuss actual FCC filings on technical spectrum policy controversies and then write their own draft comments for FCC on their analyses of the issues involved. Students will be encouraged, but not required, to file their analysis of a current issue with the FCC.

- Syllabus

• Goals and History of Spectrum Management – 10%

• Technical Issues in Spectrum Management

- Interference Control & Prevention – 15%

- Spectrum Efficiency – 10%

- Supporting Innovation – 10%

• US Spectrum Management: FCC Legal Issues for Engineers – 20%

• Case Studies of Key FCC Spectrum Management Decisions – 20%

The course will be taught on Tuesday from 4:00 - 6:45 PM beginning January 18.

Readers are encouraged to share this information with colleagues who might be interested.

Your Blogger's Students Would Get a Failing Grade for a Statement Like This; Why is it in an NTIA/CSMAC Draft that has been Approved?

“Some technologies, such as those employing code division multiple access (CDMA) techniques or those employing turbo error correction coding and hybrid retry mechanisms, such as LTE and WiMAX, are designed to operate at negative SNR but regardless, the throughput and capacity of a channel is proportional to the available SNR.” Draft Interim Report of Interference and Dynamic Spectrum Access Subcommittee, Commerce Spectrum Management Advisory Committee, 7/27/10 (p.28)

The above quote is from the draft report of the NTIA Commerce Spectrum Management Advisory Committee’s Interference and Dynamic Spectrum Access Subcommittee that was approved “subject to edits” at the 7/27/10 CSMAC meeting in Boulder CO. It was also on p. 25 of the draft presented at the 5/10 meeting. (The final version of this report has not yet been posted on the CSMAC web site so more editing is possible before finalization.)

The CSMAC charter states that the

“Committee will recommend approaches and strategies to ensure that the United States remains a leader in the introduction of new wireless technologies, while at the same time providing for the expansion of existing technologies and ensuring that the country’s homeland security, national defense, and other critical needs are satisfied.”

As we have written previously, the reports to date have focused on protecting incumbents from new entrants and putting as many burdens as possible on innovators. “Expanding new technologies” has not received much attention.

But the point of this posting is not the balance of power between incumbents and innovators. Some issues in spectrum management are subjective and reasonable people could disagree on these. Such issues include things like

- the priority of different radio services,

- what is acceptable/harmful interference,

- what is the best propagation model for a given environment,

- minimum protection distances between systems,

- use of probabilistic interference models vice worst case (MCL) geometry, etc.

But as a prominent telecom lawyer once told me, “some problems actually have answers”. The quote above is one of those. The answer here is simple: the quote above is technical nonsense!

Your blogger has taught at George Washington U. and MIT and was recently appointed to the adjunct faculty at Virginia Tech. (More soon on the course he will teach there in the Spring Term.) Any student submitting such a statement in a term paper would fail the course.

A first clue that something is wrong can be found by just Googling “hybrid retry mechanism”, as shown below. (Bing could not find any use of this phrase either.)

Now for the ability of LTE and WiMAX “to operate at negative SNR” (signal-to-noise ratio), that is, where the desired signal is weaker than the background noise or interference: this is total nonsense that should be obvious to anyone who has studied communications theory in the past 30 years. LTE and WiMAX are both OFDM systems - as is the European DTV standard, DVB-T. WiMAX has several modulation schemes and picks the best one for a given location. Their performance as a function of SNR is shown below:

WiMAX bit error rate (BER) vs. signal-to-noise ratio (SNR)

All need positive SNR to meet the BER (bit error rate) target! This is not “rocket science”. This is stuff taught to undergraduate electrical engineering students (and probably should be on amateur radio tests if the testing were not controlled by Social Security recipients). If LTE and WiMAX could operate at negative SNR, wouldn’t you think that DVB-T, their technical cousin, could also? Therefore wouldn’t DVB-T be better than the US’ ATSC DTV? It really isn’t difficult to see how foolish this statement is.

The sentence in question was not a last-minute addition to the 7/10 CSMAC draft. As stated above, it was also on p. 25 of the draft presented at the 5/10 meeting. So 2 months after this first draft was presented, the CSMAC approved the report “subject to edits” without any dissent within the committee. Does anyone read this stuff?
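Readers can check the shape of such curves themselves with the textbook approximation for uncoded, Gray-coded square QAM in additive white Gaussian noise. The sketch below is not the WiMAX data behind the plot: it ignores error correction coding, fading and OFDM overhead, takes SNR to mean symbol energy over noise density (Es/N0), and uses 1e-6 as an assumed BER target purely for illustration.

```python
import math


def q_function(x):
    """Gaussian tail probability Q(x)."""
    return 0.5 * math.erfc(x / math.sqrt(2.0))


def uncoded_ber(m, snr_db):
    """Approximate BER of Gray-coded square M-QAM in AWGN at Es/N0 = snr_db."""
    snr = 10.0 ** (snr_db / 10.0)          # dB to linear
    bits_per_symbol = math.log2(m)
    scale = (4.0 / bits_per_symbol) * (1.0 - 1.0 / math.sqrt(m))
    return scale * q_function(math.sqrt(3.0 * snr / (m - 1)))


def required_snr_db(m, target_ber=1e-6, lo=-20.0, hi=50.0):
    """Bisect for the SNR in dB at which the BER drops to target_ber."""
    for _ in range(60):
        mid = (lo + hi) / 2.0
        if uncoded_ber(m, mid) > target_ber:
            lo = mid   # still too many errors, need more SNR
        else:
            hi = mid
    return hi


for name, m in [("QPSK", 4), ("16-QAM", 16), ("64-QAM", 64)]:
    print(f"{name}: BER at 0 dB = {uncoded_ber(m, 0.0):.3f}, "
          f"needs about {required_snr_db(m):.1f} dB for BER 1e-6")
```

Under these assumptions every scheme is far from usable at 0 dB and the required SNR climbs with the modulation order. Real WiMAX and LTE links add forward error correction, which moves the thresholds, so the numbers are illustrative only.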

Your blogger has urged both FCC and NTIA to balance their advisory committee with technical people with significant peer recognition such as being IEEE fellows or members of the National Academy of Engineering. Clearly NTIA did not listen and this is an example of the result.

NTIA has to reappoint people to the CSMAC soon and FCC is still contemplating TAC nominations. Yes, the TV broadcasters and the major cellular carriers deserve a place at the table, but the price of admission should be sending someone who is technically competent in contemporary communications technology. Do we really need advisory committees made up mostly of lobbyists with little or no technical background?

Spectrum policy is too important to be left mostly to lawyers.

[Note: I attempted twice to contact a senior technical staffer at MSTV, the organization of subcommittee chairman Donovan and “the recognized industry leader in broadcasting technology and spectrum policy issues”, to ask about this sentence in the report. Neither inquiry was returned.]


NYT: 9 Years After 9/11, Public Safety Radio Is Not Ready

Adm. Barnett is quoted telling a Congressional hearing:

“For a brief moment in time, a solution is readily within reach. Unless we embark on a comprehensive plan now, including public funding, America will not be able to afford a nationwide, interoperable public safety network.”

Previously, we have described here the analogy to the Eisenhower-era Interstate Highway System where a common network of roads was built by providing federal funding and basic design controls. States did not have to accept the money and hence retained their 10th Amendment rights.

We recently discussed here the media campaign of the National Sheriffs’ Association and questioned whether it had a grass roots base or just a few corporate supporters. Lest you think I am unkind criticizing industry manipulation of public safety officials, the Times has this quote from Deputy Chief Charles F. Dowd of the New York Police Department’s communications division:

“The history of public safety is one where the vendors have driven the requirements. We don’t want that situation anymore. We want public safety to do the decision making. And since we’re starting with a clean slate, we can develop rules that everybody has to play by.”

The article ends,

(T)he window to plan a next-generation broadband system is starting to close. “There is nothing that is inevitable about having a nationwide, interoperable system,” Mr. Barnett told Congress this summer. “Indeed, the last 75 years of public safety communications teaches us that there are no natural or market forces” that will make it happen.

This is too important an issue to drag on for another decade or two or to pander to self-serving corporate interests and their pawns in the public safety area.
